TL;DR

AI analytics verified SQL builds trust by making generated queries reviewable, auditable, and aligned with governance before answers are operationalized.

AI analytics verified SQL is the difference between answer velocity and answer credibility. The direct answer: if teams cannot inspect generated SQL, they cannot reliably trust AI analytics outputs for important decisions. Reviewable SQL turns black-box responses into auditable analytics artifacts. It is the practical control that lets self-serve scale without turning analysts into cleanup crews.

This is not an abstract governance debate. It is an operating reality. The moment product, growth, and finance use AI-generated outputs in planning cycles, consistency and traceability become non-negotiable. That is why hallucination mitigation starts with SQL transparency and why mature teams treat transparency as default behavior, not an optional advanced setting.

Who this guide is for (and not for)

- For: data leaders scaling AI-powered self-serve to non-technical teams.

- For: analysts who need auditability while reducing repetitive ticket work.

- Not for: teams comfortable with opaque answers in low-stakes exploratory workflows.

- Not for: organizations unwilling to define metric ownership and review rules.

AI analytics verified SQL: trust model comparison

| Trust dimension | Opaque AI output | Partial SQL visibility | Verified SQL workflow |
|---|---|---|---|
| Analyst verifiability | Low | Medium | High |
| Decision reproducibility | Low | Medium | High |
| Cross-team alignment | Fragile | Variable | Strong with semantic ownership |
| Incident recovery | Slow | Moderate | Fast with query lineage |
| Best fit | Early demos only | Transitional workflows | Production analytics operations |

How verified SQL changes day-to-day operations

Without verified SQL, analysts spend disproportionate time reverse-engineering outputs. With verified SQL, analysts spend time validating business logic and improving semantic definitions. That distinction matters. One mode creates constant rework; the other builds cumulative trust.

Teams that implement this well can reduce request latency and correction loops at the same time, because fewer answers bounce back to analysts for rework.

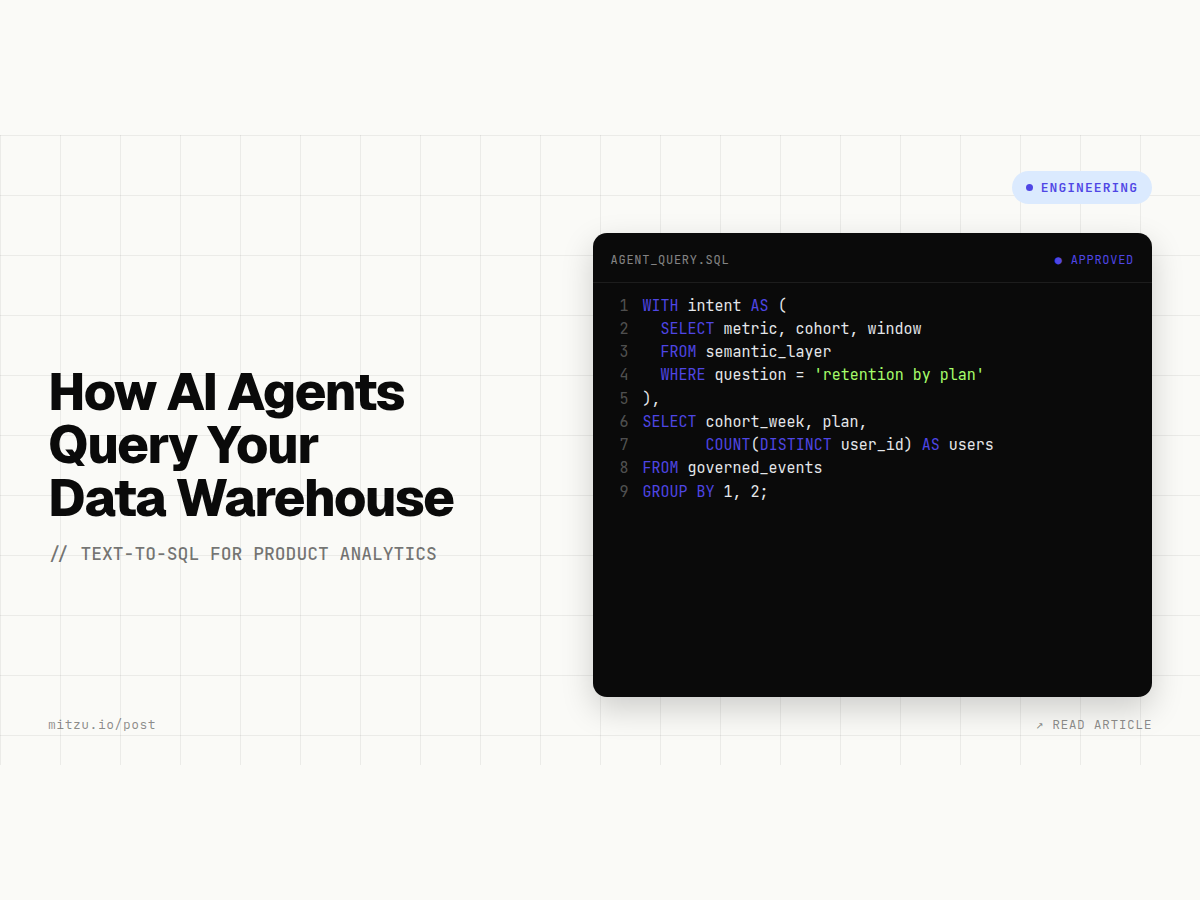

- Every answer includes the generated SQL and context by default.

- High-impact questions trigger analyst approval automatically.

- Rejected outputs are documented and fed back into semantic mappings.

- Approved patterns become reusable templates for future questions.
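To make these four defaults concrete, here is a minimal Python sketch of how an answer could be routed. It assumes a hypothetical `VerifiedAnswer` record and a hypothetical set of high-impact metric classes (`revenue`, `forecast`, `board_kpi`); none of these names come from Mitzu or any other product. Answers without SQL are rejected, high-impact classes wait for analyst approval, approved SQL is kept as a reusable template, and rejections are logged for semantic-mapping review.

```python
from dataclasses import dataclass, field

# Hypothetical metric classes that always require analyst approval.
HIGH_IMPACT_CLASSES = {"revenue", "forecast", "board_kpi"}


@dataclass
class VerifiedAnswer:
    """An AI-generated answer that always carries its SQL and context."""
    question: str
    sql: str
    metric_class: str
    context: dict = field(default_factory=dict)
    status: str = "draft"


# Approved SQL keyed by metric class, reusable as templates later.
templates: dict = {}
# Rejected answers with reasons, reviewed to update semantic mappings.
feedback_log: list = []


def route(answer: VerifiedAnswer) -> str:
    """Set and return the answer's status from SQL presence and risk class."""
    if not answer.sql.strip():
        # An answer without SQL cannot be verified, so it is never shared.
        answer.status = "rejected"
        feedback_log.append((answer.question, "missing SQL"))
    elif answer.metric_class in HIGH_IMPACT_CLASSES:
        answer.status = "pending_approval"
    else:
        answer.status = "exploratory"
    return answer.status


def approve(answer: VerifiedAnswer) -> None:
    """Mark an answer approved and store its SQL as a reusable template."""
    answer.status = "approved"
    templates.setdefault(answer.metric_class, []).append(answer.sql)


def reject(answer: VerifiedAnswer, reason: str) -> None:
    """Mark an answer rejected and record why, for semantic-layer follow-up."""
    answer.status = "rejected"
    feedback_log.append((answer.question, reason))
```

The template store and feedback log are module-level lists only to keep the sketch self-contained; a real deployment would more likely persist them in a database alongside query lineage.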

This workflow aligns naturally with structured NL-to-SQL evaluation and with the queue-reduction patterns used in analytics ticket-queue remediation. It also improves adoption in product analytics teams that need faster iteration without metric inconsistency.

"In AI analytics, SQL is not an implementation detail. SQL is the receipt that makes answers defensible."

Practical trade-offs and limits to communicate early

Verified SQL does not eliminate all risk. If source models are weak, transparent queries will expose weak logic faster, which is helpful but sometimes uncomfortable. Teams should also expect temporary throughput friction while review standards mature. The payoff is durable: less ambiguity, faster correction, and stronger confidence in cross-functional decision meetings.

If you are selecting tools, pressure-test verified SQL behavior under realistic scenarios: ambiguous questions, conflicting definitions, and high-impact metrics. Pair this with an LLM-versus-analytics-agent evaluation, then benchmark architecture fit against semantic-layer grounding principles. For migration paths away from incumbent tools, compare workflow constraints in Amplitude and Mixpanel.

Teams still defining category language can align stakeholders first using this agentic analytics explainer. Shared language reduces governance ambiguity and prevents rollout debates from collapsing into tool preference arguments.

Organizations building broader automation programs can connect this directly to AI agents strategy. Verified SQL gives those programs the governance backbone needed for long-term adoption rather than short-lived pilots.

What changes when verified SQL is adopted across departments?

In product teams, verified SQL tends to reduce argument loops around funnel interpretation because definitions can be inspected in context. In growth teams, it shortens campaign postmortems because analysts no longer need to reverse-engineer black-box outputs before discussing outcomes. In finance-adjacent workflows, it increases confidence when forecasts or variance explanations are challenged by leadership.

This is especially useful for organizations moving from static dashboard culture to conversational analytics. Without verified SQL, conversation quality often improves while decision confidence drops. With verified SQL, conversation quality and confidence can improve together because users understand how the answer was generated and where its boundaries are.

Implementation checklist for leaders introducing verified SQL

- Define which metric classes always require analyst approval before sharing.

- Ensure SQL is visible in both UI and exported answer channels.

- Train non-technical users on how to interpret query context at a high level.

- Create a lightweight rubric for approving, flagging, or rejecting outputs.

- Review rejected outputs monthly and update semantic definitions accordingly.
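The rubric item in this checklist can be as small as a function over a reviewer's yes/no answers. In this hedged sketch the check names, and the split into blocking and advisory checks, are assumptions for illustration: a failed blocking check rejects the output, a failed advisory check only flags it, and rejection reasons are tallied as input to the monthly definition review.

```python
from collections import Counter
from enum import Enum


class Verdict(Enum):
    APPROVE = "approve"
    FLAG = "flag"
    REJECT = "reject"


# Hypothetical rubric: blocking checks reject an output outright,
# advisory checks only flag it for follow-up.
BLOCKING_CHECKS = ("matches_metric_definition", "uses_governed_sources")
ADVISORY_CHECKS = ("time_window_stated", "filters_documented")


def apply_rubric(checks: dict) -> Verdict:
    """Turn a reviewer's yes/no checklist into approve, flag, or reject.

    A check missing from `checks` counts as failed.
    """
    if not all(checks.get(name, False) for name in BLOCKING_CHECKS):
        return Verdict.REJECT
    if not all(checks.get(name, False) for name in ADVISORY_CHECKS):
        return Verdict.FLAG
    return Verdict.APPROVE


def top_rejection_reasons(reasons: list, n: int = 3) -> list:
    """Most common rejection reasons, highest count first."""
    return Counter(reasons).most_common(n)
```

Treating a missing check as failed is a deliberately conservative choice: an incomplete review never silently approves an output.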

Teams that treat this checklist as optional usually see adoption stall after early excitement. Teams that operationalize it often report a healthier balance: fewer repetitive tickets, faster answer turnaround, and more consistent narrative quality in cross-functional planning reviews.

30-day implementation pattern for verified SQL

Week one should focus on visibility defaults: ensure every answer includes generated SQL and essential context fields like time window, key filters, and entity scope. Week two should define approval tiers for question classes. Week three should establish feedback capture for rejected outputs. Week four should publish trust metrics and adjust onboarding based on observed failure patterns.

This sequence is practical because it separates technical enablement from process adoption while keeping momentum high.
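Week one's visibility default can be enforced mechanically. The sketch below assumes answers arrive as plain dictionaries with hypothetical keys `sql`, `time_window`, `filters`, and `entity_scope`; it lists any missing context and renders the SQL with its context as leading SQL comments, so the same "receipt" can appear in the UI and in exported answers.

```python
# Context fields assumed required for every answer (names are hypothetical).
REQUIRED_FIELDS = ("sql", "time_window", "filters", "entity_scope")


def missing_context(answer: dict) -> list:
    """Return required fields that are absent, None, or blank strings.

    An empty filter list is allowed: it states explicitly that no filters apply.
    """
    missing = []
    for name in REQUIRED_FIELDS:
        value = answer.get(name)
        if value is None or (isinstance(value, str) and not value.strip()):
            missing.append(name)
    return missing


def render_receipt(answer: dict) -> str:
    """Prefix the SQL with its context as SQL comments for UI and exports."""
    gaps = missing_context(answer)
    if gaps:
        raise ValueError(f"answer is missing context: {', '.join(gaps)}")
    # Filters are assumed to be a list of SQL predicate strings.
    filters = "; ".join(answer["filters"]) or "none"
    header = [
        f"-- time_window: {answer['time_window']}",
        f"-- filters: {filters}",
        f"-- entity_scope: {answer['entity_scope']}",
    ]
    return "\n".join(header + [answer["sql"]])
```

Raising on missing context, rather than rendering a partial receipt, keeps incomplete answers from leaving the tool unnoticed.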

Many teams try to skip directly to broad rollout and then wonder why analysts distrust outputs. Verified SQL adoption requires shared expectations. Business users need to know when answers are exploratory versus decision-grade. Analysts need predictable escalation triggers. Leaders need metrics that show trust is increasing, not just usage volume. A 30-day implementation pattern makes these expectations explicit and easier to maintain.

Trust metrics that matter more than query volume

- Answer acceptance rate by team and metric class.

- Correction rate for distributed answers.

- Median review time for high-impact queries.

- Share of answers reused in planning or reporting artifacts.
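Once each answer is logged, these metrics are simple aggregates. The following sketch assumes a hypothetical `AnswerRecord` with one boolean per outcome and an optional review time in minutes; the field names are illustrative, and a production version would more likely be a SQL query over an answer log.

```python
from collections import defaultdict
from dataclasses import dataclass
from statistics import median
from typing import Optional


@dataclass
class AnswerRecord:
    team: str
    metric_class: str
    accepted: bool
    distributed: bool
    corrected: bool
    high_impact: bool
    reused: bool
    review_minutes: Optional[float] = None


def acceptance_by_team_and_class(records):
    """Acceptance rate per (team, metric_class) pair."""
    totals = defaultdict(lambda: [0, 0])  # key -> [accepted, total]
    for r in records:
        bucket = totals[(r.team, r.metric_class)]
        bucket[0] += r.accepted
        bucket[1] += 1
    return {key: acc / total for key, (acc, total) in totals.items()}


def correction_rate(records):
    """Share of distributed answers that later needed a correction."""
    distributed = [r for r in records if r.distributed]
    if not distributed:
        return 0.0
    return sum(r.corrected for r in distributed) / len(distributed)


def median_review_minutes(records):
    """Median review time for high-impact answers, or None if none were reviewed."""
    times = [r.review_minutes for r in records
             if r.high_impact and r.review_minutes is not None]
    return median(times) if times else None


def reuse_share(records):
    """Share of all answers reused in planning or reporting artifacts."""
    if not records:
        return 0.0
    return sum(r.reused for r in records) / len(records)
```

Correction rate is computed only over distributed answers, matching the metric's definition: an answer that never left review cannot propagate an error.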

FAQ

What is AI analytics verified SQL?

It means AI-generated answers include reviewable SQL so analysts can validate business logic before decisions are made or distributed broadly.

Does verified SQL actually reduce hallucination risk?

It reduces the risk that hallucinated logic reaches decisions. It does not remove all errors, but it makes them visible and correctable before they propagate through planning and reporting workflows.

Who should approve outputs in a verified SQL workflow?

Analysts or domain data owners should approve high-impact metrics, while low-risk exploratory outputs can be consumed with lighter review standards.

Why Mitzu is a strong fit

Mitzu is a strong fit for teams prioritizing AI analytics verified SQL because it treats transparency as a default workflow behavior, not a hidden expert feature. That enables analysts to validate logic quickly while non-technical teams still get fast answers.

If you are formalizing trust standards this quarter, anchor your rollout on visible SQL and approval tiers by risk class. You can explore Mitzu plans for governed self-serve analytics and choose a practical starting point.