TL;DR

AI agents can query your data warehouse in plain English, but only reliably when a semantic layer connects human language to your actual schema. This guide explains the architecture, the failure points, and what it takes to make AI-driven product analytics trustworthy in production.

TL;DR

AI agents can query your data warehouse in plain English — but only reliably when a semantic layer connects human language to your actual schema. Without it, the agent guesses at column names, invents metric definitions, and returns answers that look correct but aren't. This guide explains the full architecture: what happens between your question and your answer, where the failure points are, and what it takes to make AI-driven product analytics trustworthy in production.

Most product analytics tools now offer a chat bar. Type a question, get a chart. The demo looks seamless. The production reality is different.

The gap between "AI can query your warehouse" and "AI reliably answers your product questions" comes down to one architectural problem that most vendors skip over: raw warehouse tables have no inherent meaning. A column called ev_ts could be an event timestamp or a TV series rating. A table called usr_actions could contain clicks, purchases, or support tickets. Your data team knows what these mean. An AI agent, querying cold, does not.

This guide explains how AI agents actually work when querying a data warehouse, where the failure points are, and what the right architecture looks like for product teams who need answers they can trust. We'll use Mitzu — a warehouse-native agentic analytics platform built around this exact problem — as the concrete example throughout, because the architectural decisions it makes illustrate what reliable AI querying actually requires.

What it actually means for an AI agent to "query your warehouse"?

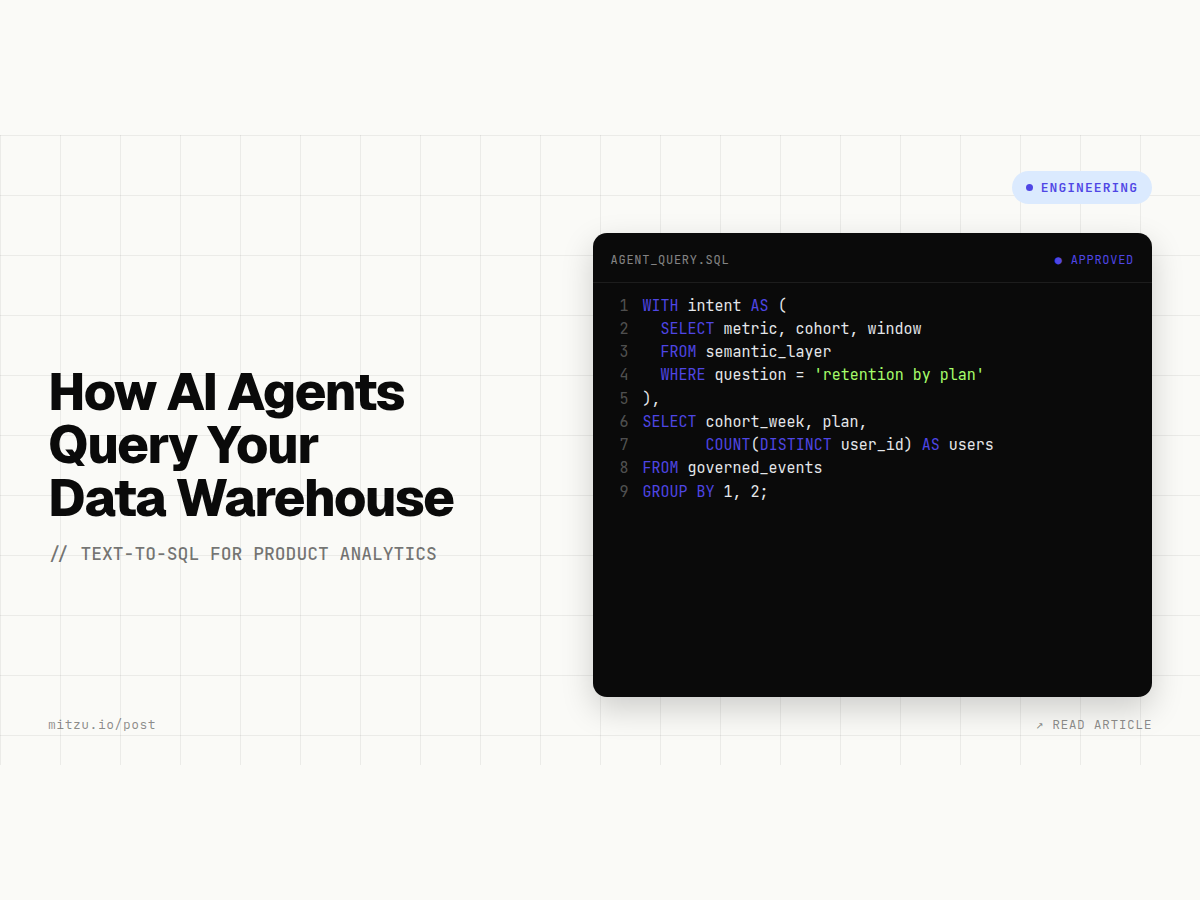

When you ask an AI-powered analytics tool "what was our 30-day retention for users who completed onboarding last quarter?", two distinct things happen in sequence.

Step 1: Natural language → SQL. The AI interprets your question, maps it to schema elements (tables, columns, filters, aggregations), and generates a SQL query. This is the NL-to-SQL step — the one that gets demoed at conferences and featured in press releases.

Step 2: SQL → warehouse execution → answer. The generated query runs against your live warehouse. The result comes back and gets formatted into a chart or a number.

Step 2 is straightforward. Step 1 is where nearly every production deployment runs into trouble.

The NL-to-SQL step requires the AI to answer questions your data model doesn't explicitly state:

- What table contains user events?

- Which column identifies a unique user?

- What events count as "completing onboarding"?

- How is "30-day retention" defined — calendar days or rolling window?

- Which users should be excluded (internal, test accounts)?

A capable LLM will make reasonable guesses. On a clean, well-named schema with a handful of tables, those guesses are often right. On a real production warehouse — with dozens of event tables, snake_case column names, legacy prefixes, and business-specific metric definitions — the guesses are frequently plausible but wrong.

The result is the most dangerous kind of analytics failure: a confident answer that looks correct but isn't.

Here is what the flow looks like in a tool built to handle this correctly. When a product manager asks Mitzu "what was our 30-day retention for users who signed up via organic last month?", Mitzu does not send that question directly to an LLM with a raw schema dump. It first routes the question through a semantic layer — a structured definition of what "30-day retention" means in your data model, which column identifies acquisition channel, and how your user table is structured. Only then does it generate SQL, execute it against your live warehouse, and return both the answer and the exact query that produced it.

The difference between that process and a raw text-to-SQL call is the difference between a result you can publish and one you have to double-check.

Why raw text-to-SQL breaks on product data specifically?

General-purpose text-to-SQL systems face a hard problem on product event data that they handle better on transactional or financial data. Here's why.

Event tables are structurally ambiguous. A page_viewed event carries a user ID, a timestamp, a URL, and a set of properties. None of those properties have universally agreed meanings. Whether properties.source maps to your acquisition channel or your internal routing depends entirely on how your engineers instrumented it. An AI agent has no way to know without being told.

Business concepts don't map 1:1 to columns. "Activation" is not a column. It's a computed concept — perhaps the completion of a sequence of three specific events within seven days of signup. "Monthly active user" could mean any event, or only a specific subset. "Churned" could mean 30 days without a session, or a subscription cancellation event, or both. An LLM generating SQL from scratch has to guess at all of this.

Schema drift compounds the problem. Event schemas change constantly as products ship new features. Column names get renamed, new event types are added, old ones are deprecated. A text-to-SQL model trained or prompted on last month's schema may generate valid SQL that returns wrong answers on this month's data.

A 2026 analysis of enterprise NL-to-SQL deployments found that while models can achieve 80%+ accuracy on standardized benchmarks, enterprise production accuracy drops sharply when questions involve multi-step business logic, ambiguous column semantics, or cross-table joins — which describes most real product analytics questions.

The missing layer: semantic context

The solution is not a smarter LLM. It's a layer of structured business context that sits between the AI and the raw warehouse schema.

This is what the industry calls a semantic layer — a mapping of human business concepts to the underlying data structures that implement them. It answers the questions that raw SQL can't:

| Human concept | Semantic layer definition |

|---|---|

| "activated user" | completed onboarding_completed event within 7 days of user_created |

| "30-day retention" | returned session within 30 days of first session, rolling window |

| "acquisition channel" | utm_source from first session, with fallback to referrer_domain |

| "churned" | no session in past 30 days AND no active subscription in subscriptions table |

| "product-qualified lead" | reached activation AND plan = 'free' AND company_size > 10 |

When an AI agent has access to a semantic layer like this, NL-to-SQL becomes far more reliable. The agent doesn't need to guess what "activated user" means — it looks up the definition and constructs SQL accordingly. If the definition changes (engineering updates the onboarding flow), the semantic layer gets updated once, and every downstream AI query immediately reflects that change.

This is also why tools like dbt Semantic Layer and Cube exist: not to make SQL easier to write, but to make the business logic portable and consistent across every system that consumes your data — including AI agents.

Mitzu builds its semantic layer directly on top of your warehouse, with native dbt integration so that metric definitions you've already written in dbt flow through automatically. When a PM asks a question, the AI operates within the boundaries that your data team has defined — it cannot invent a metric that hasn't been modeled, and it cannot join tables in ways that violate your data model. That constraint is a feature, not a limitation. It is the mechanism that keeps AI answers trustworthy at scale.

How warehouse-native analytics makes AI querying reliable?

The architecture matters as much as the semantic layer. There are two fundamentally different ways an AI tool can give you answers from your warehouse data:

Copy-and-process architecture: Your warehouse syncs a copy of events to the analytics tool's own database. The AI queries that copy. This is how Amplitude, Mixpanel, and most traditional product analytics tools work, even those with warehouse connectors.

Direct-query architecture: The AI tool connects to your warehouse directly and runs queries against live data. No copy is made. This is what warehouse-native tools like Mitzu do.

The difference matters for AI reliability in two ways.

First, freshness. Copied data is always behind. If your sync runs every 6 hours, an AI agent answering "why did signups drop this morning?" is working with stale data. In a direct-query architecture, the answer reflects the warehouse state at the moment you asked.

Second, governance. When data is copied to a third-party system, your warehouse access controls don't carry through. Columns that are masked or row-level-secured in Snowflake or BigQuery are accessible in full in the analytics tool's copy. In a direct-query architecture, the same permissions that govern your warehouse govern the AI's queries.

When these two things combine — a semantic layer that defines business concepts, and a direct-query architecture that runs AI-generated SQL against live governed data — you get the architecture that makes AI product analytics actually trustworthy.

What kinds of product questions can AI reliably answer?

Not every question is equal. Here's a realistic map of where AI query reliability sits today, given a well-configured semantic layer:

| Question type | Reliability | Notes |

|---|---|---|

| "What was our 7-day retention last month?" | High | Single metric, well-defined window |

| "Show me the funnel from signup to activation, by cohort week" | High | Multi-step but deterministic once defined |

| "Which features correlate with 90-day retention?" | Medium | Correlation is computable; causation requires human judgment |

| "Why did our activation rate drop 12% last Tuesday?" | Medium | Root cause requires multi-hypothesis investigation; AI can surface candidates, not conclusions |

| "What should we build next to improve retention?" | Low | Strategic judgment, not an analytics computation |

| "Which users are at risk of churning this month?" | Medium–High | Depends on how "churn risk" is modeled in the semantic layer |

| "Compare retention for users acquired via paid vs. organic in Q1" | High | Segmented metric, well-scoped |

The pattern: deterministic questions with well-defined metrics return reliable answers. Open-ended root cause and strategic questions still require human reasoning — AI can accelerate the data retrieval step, not replace the analytical judgment step.

This is a useful filter when evaluating any AI analytics tool. Ask your vendor: "Can you answer this specific question against my actual schema?" Then inspect the SQL it generated. If the SQL is wrong or the vendor won't show it to you, the reliability story is incomplete.

Mitzu shows the full SQL behind every answer as a first-class feature — not buried in a debug panel, but visible inline alongside the result. The reasoning: product managers shouldn't have to trust a black box, and data teams shouldn't have to audit answers they can't inspect. When a number looks wrong, you click through to the query, find the issue, and fix the semantic definition — no support ticket required.

Practical setup: what you need before AI can query your product data

Teams that get AI product analytics working reliably in production have typically done four things before they turned on the AI layer.

- Clean event schema with agreed naming conventions. Events should have consistent names (

user_signed_up, notsignup_evin one table anduser.registerin another), and properties should be documented. This doesn't need to be perfect — but it needs to be consistent enough that a human can explain what each event means. - Defined core metrics in a semantic layer. The five or ten metrics that matter most to your product team — activation rate, 7-day retention, funnel conversion, MAU — should be formally defined. This is the work that most teams skip and then blame the AI tool for when answers are wrong.

- Warehouse access with appropriate permissions. The AI tool needs read access to the relevant tables. In a direct-query architecture, your existing row-level security and column masking apply automatically. Verify this before assuming.

- A validation process for AI-generated queries. Even with a good semantic layer, some AI queries will be wrong. The right governance model is not "trust every answer" or "require a human to approve every query." It's a middle path: routine, deterministic queries run automatically; edge cases and unusual queries queue for analyst review before execution. Mitzu's analyst approval workflow implements this pattern.

Teams that skip step 2 — metric definitions — consistently report the most AI reliability problems. The AI is not at fault. It is amplifying the lack of a shared definition that already existed. The good news: shipping an AI analytics layer often forces the metric definition conversation that data teams have been deferring for years.

The governance question: who controls what the AI can see?

One question that enterprise buyers ask immediately when hearing "AI can query your warehouse" is: what stops it from seeing data it shouldn't?

In a copy-based architecture, the answer is typically: the analytics tool's own access controls — which are separate from your warehouse permissions, need to be configured separately, and are often incomplete for complex permission models.

In a direct-query, warehouse-native architecture, the answer is: the same controls that govern everything else in your warehouse. If a column is masked for a given role in Snowflake, an AI query running under that role sees the masked version. If row-level security restricts a user to their own organization's data in BigQuery, the AI query respects that restriction automatically.

This is not a minor architectural detail. For any company handling personal data, health data, or financial data, the governance gap in copy-based architectures is a real compliance risk. It is also a sales blocker: enterprise procurement teams increasingly ask "where does the AI run?" as part of vendor security reviews. "On your live warehouse, with your existing permissions" is a much cleaner answer than "on a copy in our cloud."

Mitzu's architecture answers this question cleanly. Queries run through your warehouse service account under your existing IAM roles and row-level security policies. There is no Mitzu-side copy of your data. If a column is masked in Snowflake, it is masked in Mitzu's AI output.

If a user role in BigQuery is restricted to a specific dataset, that restriction holds through every AI query that user runs. The security review conversation changes from "explain your data handling policies" to "show them the same Snowflake role they already approved."

FAQ

What is the difference between text-to-SQL and an AI agent querying a warehouse?

Text-to-SQL converts a natural language question into a SQL query, which a human or system then executes. An AI agent that queries a warehouse goes further: it plans the query, executes it, validates the result, and may take additional steps (like drilling into a sub-question) before returning a final answer. The agent behavior adds autonomy; the text-to-SQL step is still the core technical challenge.

Why does AI product analytics need a semantic layer?

Because product event tables don't carry their own meaning. Column names, event types, and table structures are engineering decisions that encode business logic — but that logic lives in the heads of the data team, not in the schema. A semantic layer makes that logic explicit and machine-readable, so an AI agent can generate correct SQL without guessing at what your business concepts mean.

Can I use ChatGPT or Claude directly to query my warehouse?

General LLMs can generate SQL from natural language, and with the right MCP setup they can execute it against your warehouse. The limitation is governance and reliability: general models have no access to your semantic layer, no awareness of your metric definitions, and no mechanism for analyst review of generated queries. For ad-hoc technical exploration, this works. For self-serve product analytics at team scale, purpose-built tools with semantic layers are more reliable.

Does the AI see all my data?

In a direct-query warehouse-native architecture, the AI runs queries with the permissions of the connected service account or user role — the same permissions that apply to any other query. It sees exactly what that role is allowed to see, nothing more. In copy-based architectures, data is transferred to the vendor's systems and governed by their access model separately from your warehouse.

How long does it take to set up AI warehouse querying for product analytics?

With a warehouse already in place and a reasonably clean event schema, connecting a warehouse-native tool like Mitzu takes under 10 minutes. Defining the semantic layer — mapping business concepts to your actual schema — takes longer and depends on your team's existing documentation. Teams with well-maintained dbt models typically complete semantic configuration in a few hours. Teams starting from scratch may spend a day or two on metric definitions before AI reliability reaches production quality.

What questions should I ask any AI analytics vendor during evaluation?

Three questions cut through most demos: (1) Show me the SQL you generated for this specific question against my actual schema. (2) What happens when the AI generates wrong SQL — how does your system catch it? (3) Where is my data processed — on my warehouse, or on your servers?

Key Takeaways

- AI agents query your warehouse in two steps: NL-to-SQL generation, then warehouse execution. Step 1 is where reliability is won or lost.

- Raw text-to-SQL breaks on product data because event tables carry no inherent meaning — business concepts like "activation" and "churn" must be explicitly defined.

- A semantic layer that maps human concepts to schema elements is the prerequisite for reliable AI analytics, not an optional enhancement.

- Warehouse-native, direct-query architecture preserves your existing governance and reflects live data — copy-based architectures do neither.

- AI reliably answers deterministic product analytics questions (retention, funnels, segmentation). Root cause analysis and strategic judgment still require human reasoning.

- The right governance model for AI-generated queries is not full automation or full manual review — it's a middle path where routine queries run automatically and edge cases queue for analyst approval.

How to get started with Mitzu?

Mitzu connects directly to Snowflake, BigQuery, Databricks, Redshift, and other warehouses. You define your semantic layer — what your events mean, how your metrics are calculated — and product managers can immediately ask questions in plain English. Queries run against your live warehouse. The SQL is always visible.

- Connect your data warehouse → point Mitzu at your warehouse with read credentials.

- Define your events → map your event tables to business concepts: what counts as a session, an activation, a funnel step.

- Start analyzing → ask questions in plain English. Inspect the SQL behind every answer. Set up Slack alerts for metric anomalies.

Start free → No demo call required. Under 10 minutes to first query.