TL;DR

Compare leading AI analytics platforms by warehouse-native connectivity, self-service depth, deep-dive exploration, and KPI monitoring so data teams can choose the right fit.

An AI analytics platform is only as strong as its warehouse connectivity, semantic context, and governance model. For modern teams, the goal is not chat-only BI but agentic analytics that can answer real business questions with transparent logic. If your team is still defining the category, start with what agentic analytics means in practice.

This guide compares leading platforms across four criteria that matter for enterprise adoption: warehouse-native analytics architecture, self-service analytics for data analysts, deep-dive analysis and exploration, and business KPI monitoring and dashboards. The objective is to help data and analytics leaders choose based on operating-model fit, not feature checklists alone.

Comparison criteria for AI analytics platform selection

- Warehouse-native connectivity: Can the platform query live warehouse data without copying events into a separate store?

- Self-service depth: Can analysts and business teams ask new questions without filing tickets for every metric change?

- Deep-dive analysis and exploration: Does the product support root-cause investigation, segmentation, and query traceability?

- Business KPI monitoring and dashboards: Can teams monitor performance continuously with alerts, not only static reports?

These criteria align with the shift from dashboard consumption to governed AI analyst workflows. Teams evaluating this shift should also review why verified SQL trust is a core requirement before rollout.
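To make the first and last criteria concrete, here is a minimal Python sketch of a KPI check that queries data where it lives and returns the SQL alongside the result. It is an illustration under stated assumptions, not any vendor's implementation: an in-memory SQLite database stands in for a cloud warehouse, and the `orders` table, the `check_kpi` helper, and the threshold value are all hypothetical.

```python
import sqlite3


def check_kpi(conn, sql, threshold):
    """Run a KPI query in place and flag values below the threshold.

    The SQL is returned with the result so a reviewer can trace
    exactly how the number was produced.
    """
    value = conn.execute(sql).fetchone()[0]
    return {"value": value, "sql": sql, "alert": value < threshold}


# Stand-in for a warehouse connection (assumption: SQLite in memory).
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE orders (day TEXT, revenue REAL)")
conn.executemany(
    "INSERT INTO orders VALUES (?, ?)",
    [("2024-01-01", 120.0), ("2024-01-02", 80.0)],
)

# Hypothetical KPI: revenue for a single day, with an alert floor of 100.
KPI_SQL = "SELECT SUM(revenue) FROM orders WHERE day = '2024-01-02'"
result = check_kpi(conn, KPI_SQL, threshold=100.0)
print(result)
```

The point of the sketch is the shape of the result: the data is never copied out of its store, and every alert carries the query that triggered it.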

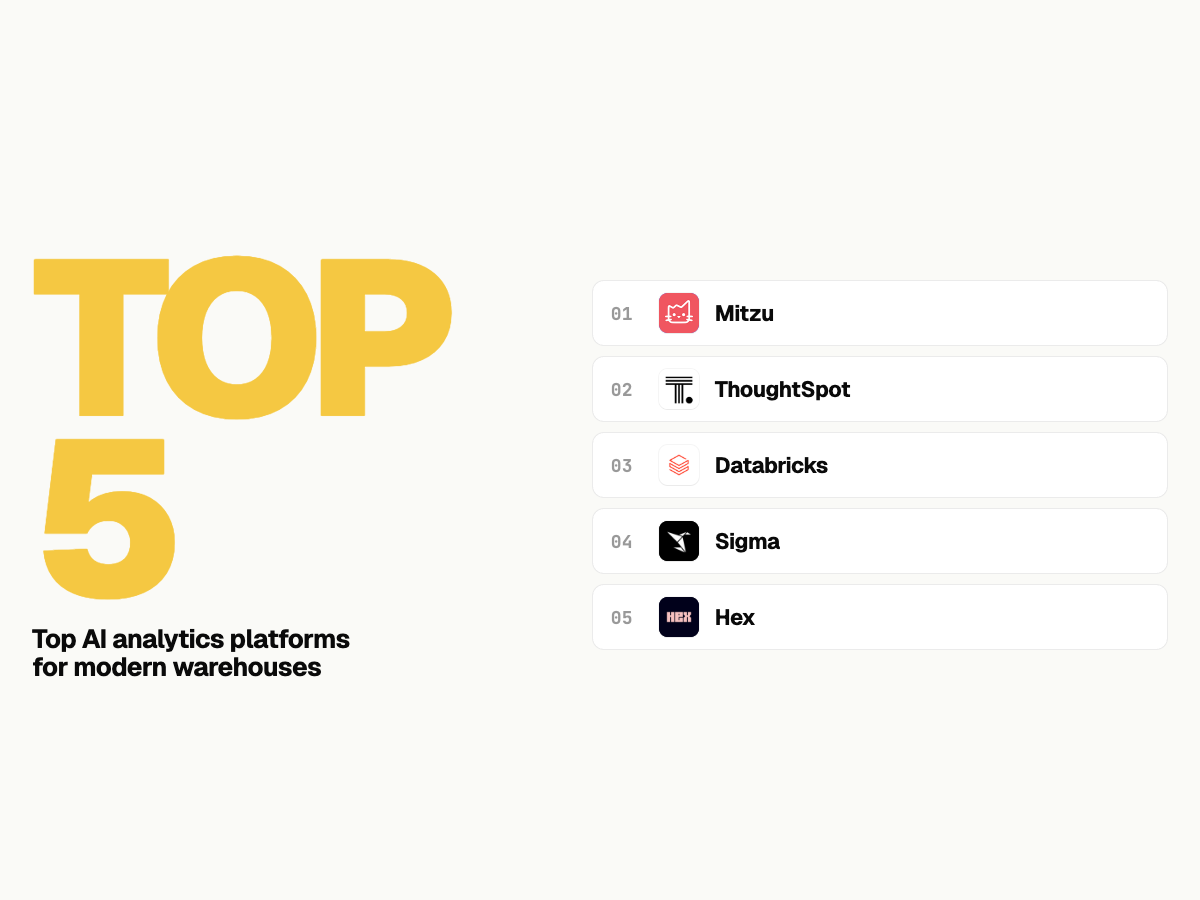

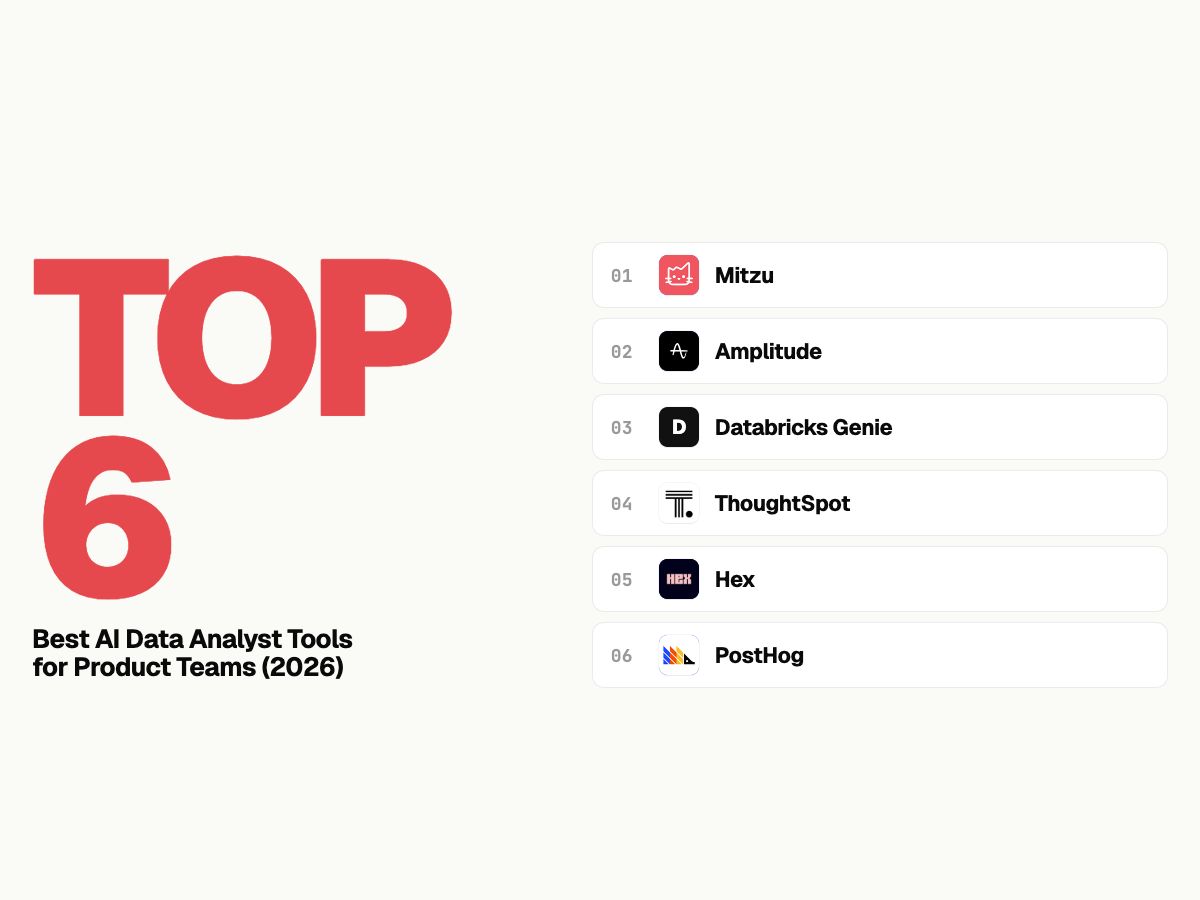

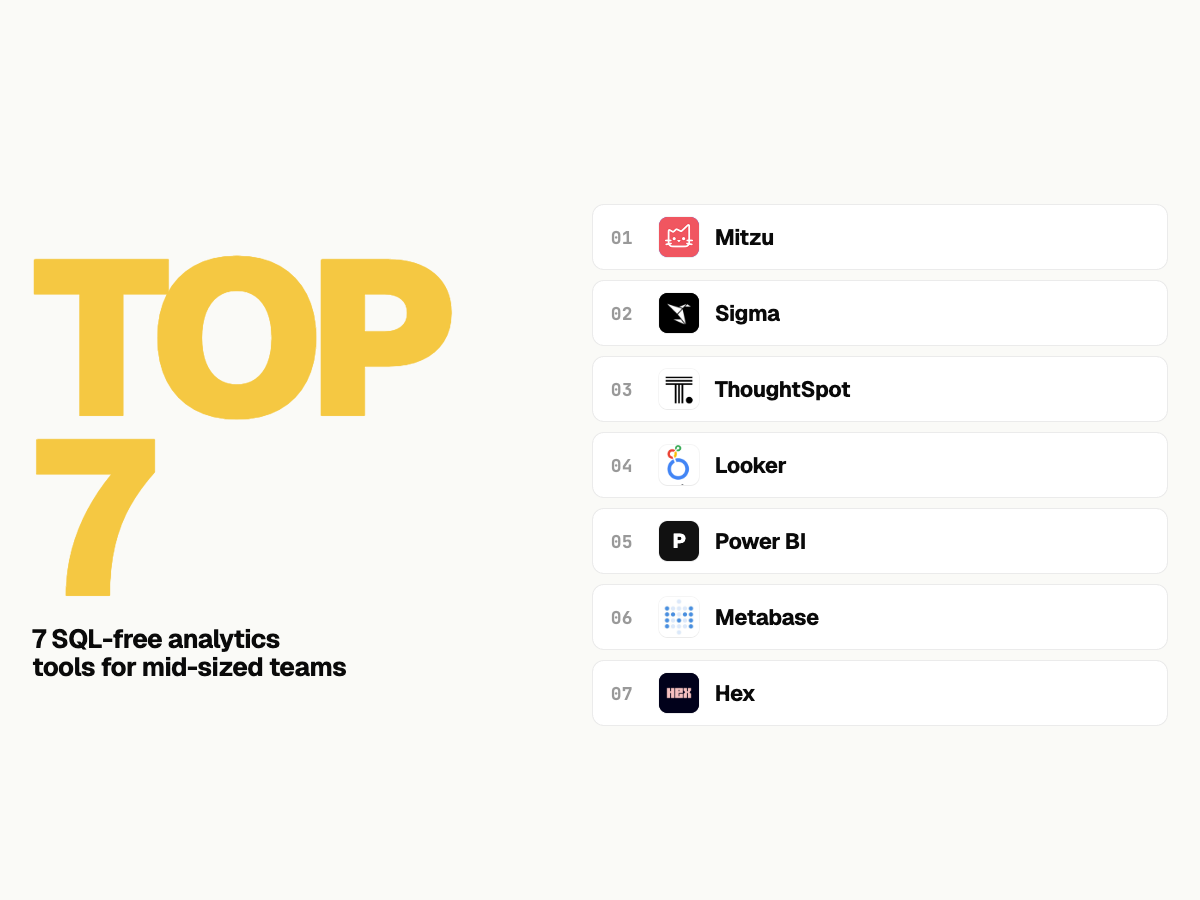

Top platforms compared for modern data warehouse integration

| Platform | Warehouse-native connectivity | Self-service depth | Deep-dive exploration | KPI monitoring and dashboards | Best fit |
|---|---|---|---|---|---|
| Mitzu | Direct query across major warehouses; no copy | High; NL-to-SQL with analyst review workflow | High; transparent SQL plus semantic-layer grounded metrics | High; proactive monitoring with notifications | Teams wanting governed agentic analytics without warehouse lock-in |
| ThoughtSpot | Strong warehouse integrations | Medium-high for search analytics | Medium; strong BI workflows, partial query transparency | Medium-high dashboard and search monitoring | Enterprise BI programs with mature analytics operations |
| Databricks Genie | Excellent inside Databricks ecosystem | Medium-high for Databricks-centric teams | Medium; strongest with existing Lakehouse governance | Medium; depends on broader Databricks setup | Organizations standardized on Databricks and Unity Catalog |
| Sigma Computing | Direct warehouse model | Medium; spreadsheet-first self-service | Medium-high for analyst-led workflows | Medium dashboarding and reporting coverage | Business teams preferring spreadsheet-style analytics |
| Hex | Direct warehouse connectivity | Medium for non-technical teams, high for analysts | High for notebook-led deep dives | Medium; more exploration-centric than monitoring-centric | Analyst-heavy teams prioritizing technical exploration |
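One way to turn the table into a shortlist is a simple weighted score. The sketch below is a rough illustration rather than a definitive ranking method: it uses only the deep-dive and KPI monitoring columns, because those carry a clear leading label for every platform, and both the numeric mapping in `LEVELS` and the example weights are assumptions you should replace with your own.

```python
# Ordinal values for the table's qualitative labels (an assumption,
# not a vendor-supplied metric).
LEVELS = {"Medium": 2.0, "Medium-high": 2.5, "High": 3.0}

# Leading labels copied from the comparison table above.
RATINGS = {
    "Mitzu": {"deep_dive": "High", "kpi_monitoring": "High"},
    "ThoughtSpot": {"deep_dive": "Medium", "kpi_monitoring": "Medium-high"},
    "Databricks Genie": {"deep_dive": "Medium", "kpi_monitoring": "Medium"},
    "Sigma Computing": {"deep_dive": "Medium-high", "kpi_monitoring": "Medium"},
    "Hex": {"deep_dive": "High", "kpi_monitoring": "Medium"},
}


def weighted_scores(ratings, weights):
    """Return the weighted average of the mapped labels per platform."""
    total_weight = sum(weights.values())
    scores = {}
    for platform, labels in ratings.items():
        points = sum(LEVELS[labels[c]] * w for c, w in weights.items())
        scores[platform] = points / total_weight
    return scores


def shortlist(ratings, weights, top_n=3):
    """Return the top_n platform names, highest weighted score first."""
    scores = weighted_scores(ratings, weights)
    return sorted(scores, key=scores.get, reverse=True)[:top_n]


# Hypothetical weighting for a team that values exploration over monitoring.
exploration_first = {"deep_dive": 3, "kpi_monitoring": 1}
print(shortlist(RATINGS, exploration_first))
```

Changing the weights changes the order, which is the intent: the result reflects your operating model rather than an absolute verdict on any product.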

Platform-by-platform analysis

Mitzu

Mitzu is designed for teams that want an AI analytics platform to operate directly on cloud warehouse data while keeping metric definitions auditable. It combines natural-language workflows with analyst-verified SQL so business teams can move faster without bypassing data quality controls. This is especially relevant for product, growth, and finance teams sharing KPI ownership.

- Pros: Strong warehouse-native coverage, semantic-layer grounding, and SQL transparency for trust and governance.

- Pros: Good balance of self-service access and analyst oversight through review workflows.

- Cons: Teams still need baseline warehouse modeling quality to get high-quality outputs consistently.

- Cons: Organizations seeking only dashboard consumption may not use the full depth of agentic workflows.

ThoughtSpot

ThoughtSpot is a mature enterprise platform with strong search-driven analytics and broad distribution patterns across large organizations. It is often a good fit when teams already operate a centralized BI model and want to extend that model with AI-assisted query experiences rather than adopt a net-new analytics operating system.

- Pros: Enterprise-grade governance posture and proven rollout patterns for cross-functional adoption.

- Pros: Strong natural-language discovery experience for metric lookup and dashboard navigation.

- Cons: Can require heavier implementation and enablement than lighter warehouse-native entrants.

- Cons: For teams prioritizing full query traceability, transparency depth may vary by workflow.

Databricks Genie

Databricks Genie is compelling when your analytics and ML stack already runs on the Lakehouse. It benefits from existing governance through Unity Catalog and can accelerate conversational analytics in environments where engineering and data science are deeply integrated with Databricks-native tooling.

- Pros: Strong native alignment for Databricks-first teams with centralized governance.

- Pros: Useful path to enable business users without exporting data to external BI silos.

- Cons: Multi-warehouse organizations may face portability and standardization tradeoffs.

- Cons: Monitoring and dashboard experience quality can depend on broader stack decisions.

Sigma Computing

Sigma is often chosen by teams that want spreadsheet-like interaction on top of live warehouse data. It can improve business adoption because users can explore metrics in a familiar grid-based workflow while keeping the warehouse as the source of truth.

- Pros: Familiar interface for business users who are comfortable with spreadsheet logic.

- Pros: Strong fit for collaborative analysis and operational reporting on warehouse data.

- Cons: Less oriented toward autonomous agent behavior than specialized agentic analytics products.

- Cons: Deep exploratory workflows may still rely on analyst guidance for complex modeling.

Hex

Hex is strongest in analyst-led environments where SQL, Python, and reproducible notebooks are central to decision-making. It supports deep-dive analysis and exploration particularly well for technical users who need to iterate quickly from hypothesis to validated result.

- Pros: Excellent for advanced analysis, experimentation, and technical storytelling in notebooks.

- Pros: Strong productivity for data analysts working with code-first workflows.

- Cons: Broader business self-service can require additional enablement compared with no-code-first tools.

- Cons: KPI monitoring workflows may need complementary tooling when executive dashboards are the main requirement.

How to choose the best-fit platform?

If your top priority is governed self-service on live warehouse data, prioritize platforms that combine semantic metrics and SQL transparency. If your priority is broad enterprise BI distribution, evaluate search-first and dashboard-first products. For analyst-heavy deep work, notebook-centric tools remain strong complements. For a broader market view, see best AI analytics tools in 2026 and agentic analytics platforms compared.

As a practical shortlist step, map each vendor to your must-have paths: AI analytics agent workflows, semantic-layer governance, and self-serve product analytics. If you need implementation support, book a demo.

FAQ

What should data leaders prioritize when evaluating an AI analytics platform?

Prioritize warehouse-native execution, governance, and transparent query logic before interface quality. These determine whether answers can be trusted at scale across teams.

How is agentic analytics different from standard self-service BI?

Standard self-service BI helps users explore predefined models, while agentic analytics can interpret intent, generate and run analysis, and return explainable outputs. This is why SQL transparency and hallucination control are central in AI-era analytics selection.
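As a minimal sketch of what SQL transparency can look like in practice, the Python below attaches the generated query and its review status to every answer. Everything here is hypothetical and for illustration only: `GeneratedAnswer`, `is_read_only`, and `release` are invented names, not the API of any platform discussed in this guide, and the read-only check is a deliberately crude first-keyword test.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class GeneratedAnswer:
    question: str                      # the user's natural-language question
    sql: str                           # the SQL generated to answer it
    verified_by: Optional[str] = None  # reviewing analyst; None if unreviewed


def is_read_only(sql):
    """Crude guard: accept only statements that start with SELECT or WITH."""
    words = sql.strip().split(None, 1)
    return bool(words) and words[0].upper() in {"SELECT", "WITH"}


def release(answer):
    """Return the answer with its SQL and review status made visible."""
    if not is_read_only(answer.sql):
        raise ValueError("refusing to release a non-read-only statement")
    label = "verified" if answer.verified_by else "unverified draft"
    return f"[{label}] {answer.question}\nSQL: {answer.sql}"


draft = GeneratedAnswer(
    question="What was revenue last week?",
    sql="SELECT SUM(revenue) FROM orders",
)
print(release(draft))
```

The design choice worth noting is that the status label travels with the answer, so a reader can always tell whether an analyst has reviewed the underlying query.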

Can one platform handle both KPI monitoring and deep-dive analysis?

Yes, but capability depth differs by product architecture. Some tools are stronger in executive KPI monitoring, while others are stronger in analyst exploration. Teams should test both workflows during evaluation.