TL;DR

Natural language to SQL analytics succeeds when buyers score transparency, semantic mapping, access control, and auditability before prioritizing chat UX.

Natural language to SQL analytics should be evaluated like an operational system, not a chat demo. The short answer for buyers: prioritize query transparency, semantic consistency, and governance controls before UX polish. Teams that invert this order often move fast for a month and then stall when trust declines. This checklist is designed to prevent that failure mode from day one.

Most procurement scorecards miss what matters because they over-index on interaction quality and under-index on reliability behavior. A platform that answers beautifully but cannot explain its SQL is not an analytics solution at scale. If this sounds familiar, pair this guide with our SQL-transparency framework and the category primer on agentic analytics architecture before final vendor scoring.

Who this checklist is for, and who it is not for

- For: data and analytics leaders selecting a production NL-to-SQL layer for cross-functional teams.

- For: product and growth organizations blocked by long request cycles and metric interpretation bottlenecks.

- Not for: teams with unresolved KPI ownership and unstable event instrumentation.

- Not for: organizations seeking novelty demos without change-management commitment.

Natural language to SQL analytics checklist: what to score

| Checklist criterion | Why it matters | Minimum acceptable bar | Best-practice bar |
|---|---|---|---|
| SQL transparency | Enables analyst verification | SQL available on request | SQL visible by default plus review workflow |
| Semantic layer quality | Prevents metric drift | Core KPI mapping | Entity and KPI graph with owners |
| Warehouse execution | Controls freshness and governance | Live query support | Semantic-layer grounded with existing permissions |
| Failure behavior | Reduces confident wrong answers | Basic confidence tags | Abstain/escalate path with analyst handoff |
| Auditability | Supports accountability | Query logs retained | Question-to-query lineage with approvals |
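One way to turn the table into a scorecard is to treat the minimum acceptable bar as a gate and the best-practice bar as a bonus. The sketch below is only an illustration: the 0 to 2 scale, the snake_case criterion names, and the rule that any criterion below the minimum disqualifies a vendor are all assumptions, not part of the checklist itself.

```python
from dataclasses import dataclass

# Criterion names mirror the checklist table. The 0-2 scale and the
# gating rule are illustrative assumptions.
CRITERIA = [
    "sql_transparency",
    "semantic_layer_quality",
    "warehouse_execution",
    "failure_behavior",
    "auditability",
]

BELOW_MINIMUM, MINIMUM, BEST_PRACTICE = 0, 1, 2


@dataclass
class VendorScore:
    name: str
    levels: dict  # criterion name -> 0, 1, or 2

    def failed_criteria(self):
        """Criteria scored below the minimum bar (a missing score counts as failed)."""
        return [c for c in CRITERIA if self.levels.get(c, BELOW_MINIMUM) < MINIMUM]

    def total(self):
        """Sum of levels across all checklist criteria."""
        return sum(self.levels.get(c, BELOW_MINIMUM) for c in CRITERIA)


def rank_vendors(vendors):
    """Drop vendors that miss any minimum bar, then sort the rest by total score."""
    qualified = [v for v in vendors if not v.failed_criteria()]
    return sorted(qualified, key=lambda v: v.total(), reverse=True)


# Hypothetical vendors for illustration only.
vendor_a = VendorScore("Vendor A", {c: BEST_PRACTICE for c in CRITERIA})
vendor_b = VendorScore(
    "Vendor B",
    {**{c: BEST_PRACTICE for c in CRITERIA}, "sql_transparency": BELOW_MINIMUM},
)
vendor_c = VendorScore("Vendor C", {c: MINIMUM for c in CRITERIA})

ranking = [v.name for v in rank_vendors([vendor_c, vendor_b, vendor_a])]
print(ranking)
```

Gating before summing keeps a vendor with strong UX scores from compensating for missing SQL transparency, which is the ordering this checklist argues for.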

How to run a reliable pilot

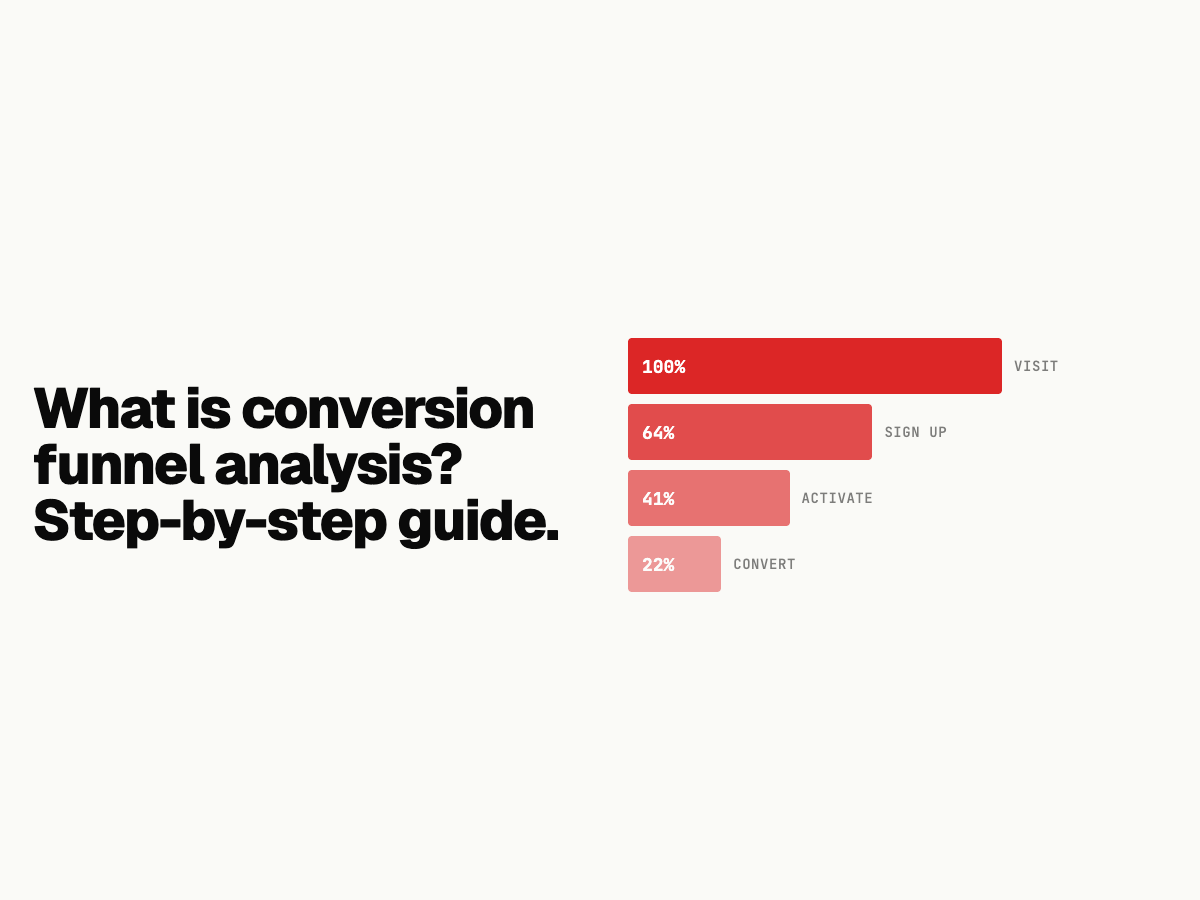

- Choose one KPI domain with stable definitions, usually activation or conversion.

- Test with one product and one growth team before broader access.

- Require analyst review for executive, financial, or externally shared metrics.

- Track answer acceptance, correction rate, and time-to-decision weekly.

- Feed rejected outputs back into semantic definitions and question guidance.
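The weekly tracking step can be as simple as a small log of pilot answers and three derived numbers. The following is a minimal sketch under assumed definitions: the `PilotAnswer` fields are hypothetical, acceptance and correction are recorded as plain booleans, and time-to-decision is summarized by the median of answers that led to a decision.

```python
from dataclasses import dataclass
from statistics import median
from typing import Optional


@dataclass
class PilotAnswer:
    accepted: bool                        # requester accepted the answer
    corrected: bool                       # an analyst had to change SQL or interpretation
    minutes_to_decision: Optional[float]  # None if no decision was made


def weekly_metrics(answers):
    """Return acceptance rate, correction rate, and median time-to-decision."""
    if not answers:
        return None
    n = len(answers)
    decided = [a.minutes_to_decision for a in answers if a.minutes_to_decision is not None]
    return {
        "acceptance_rate": sum(a.accepted for a in answers) / n,
        "correction_rate": sum(a.corrected for a in answers) / n,
        "median_minutes_to_decision": median(decided) if decided else None,
    }


# Hypothetical week of pilot answers.
week = [
    PilotAnswer(accepted=True, corrected=False, minutes_to_decision=12.0),
    PilotAnswer(accepted=True, corrected=True, minutes_to_decision=45.0),
    PilotAnswer(accepted=False, corrected=True, minutes_to_decision=None),
    PilotAnswer(accepted=True, corrected=False, minutes_to_decision=20.0),
]

metrics = weekly_metrics(week)
print(metrics)
```

Rejected answers (here, the third entry) are the ones worth feeding back into semantic definitions and question guidance.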

Pilot design should mimic production pressure, not idealized demos. Include ambiguous prompts, edge-case segmentation, and follow-up questions that require contextual joins. The way a tool handles uncertainty tells you more than polished first-pass answers. For comparative behavior, use the LLM versus analytics-agent benchmark during evaluation.

"The fastest path to self-serve analytics is not fewer controls; it is better controls embedded in the user workflow."

Common buyer mistakes and how to avoid them

Mistake one is treating SQL visibility as an optional admin feature. It should be a default behavior. Mistake two is skipping semantic ownership because the model appears smart enough in demos. Mistake three is measuring adoption by query count instead of decision quality. You can avoid all three by tying rollout to your existing product analytics operating model and governance standards from the verified SQL guide.

A practical internal alignment path is: define architecture principles on trusted agentic analytics, align cross-functional use cases on AI agent workflows, and benchmark migration implications against Amplitude or Mixpanel where relevant. Teams with heavy request pressure should also incorporate ticket-queue fixes as part of rollout planning.

Answer-engine readiness and content operations guidance

Many teams now care about answer engine optimization (AEO) and generative engine optimization (GEO) outcomes as much as internal analytics usability. The same principles apply. Answers should be concise, evidence-backed, and explainable. If your AI analytics output is transparent enough for internal decision review, it is usually also easier to repurpose for high-quality public-facing responses and executive communication. In contrast, black-box outputs can sound polished while being difficult to defend.

From a content operations perspective, assign one owner for semantic definitions and one owner for communication standards. This prevents a common anti-pattern where data logic changes faster than external messaging and internal enablement materials. Teams with mature operations often create a small monthly review cycle where analysts, product ops, and growth ops revisit top queries, rejected answers, and newly ambiguous question patterns.

- Document direct-answer templates for recurring business questions.

- Store approved query patterns in a shared reviewable knowledge base.

- Tag high-risk query classes (financial, legal, board-facing) for strict approvals.

- Track ambiguity hotspots and update semantic definitions proactively.
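Tagging high-risk query classes can start as a simple routing rule in front of the approval workflow. The sketch below assumes keyword matching on the question text, and the keyword lists are hypothetical. In practice, tagging by the owning metric in the semantic layer is likely more reliable than matching words.

```python
import re

# Hypothetical keyword patterns for the three risk classes named above.
HIGH_RISK_PATTERNS = {
    "financial": re.compile(r"\b(revenue|arr|mrr|margin|refunds?)\b", re.IGNORECASE),
    "legal": re.compile(r"\b(gdpr|consent|legal|compliance)\b", re.IGNORECASE),
    "board-facing": re.compile(r"\b(board|investors?)\b", re.IGNORECASE),
}


def risk_tags(question):
    """Return the sorted list of high-risk classes a question matches."""
    return sorted(
        tag for tag, pattern in HIGH_RISK_PATTERNS.items() if pattern.search(question)
    )


def requires_strict_approval(question):
    """A question needs strict approval if it matches any high-risk class."""
    return bool(risk_tags(question))


print(risk_tags("What was MRR by plan for the board deck?"))
print(requires_strict_approval("Weekly activation rate by signup channel"))
```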

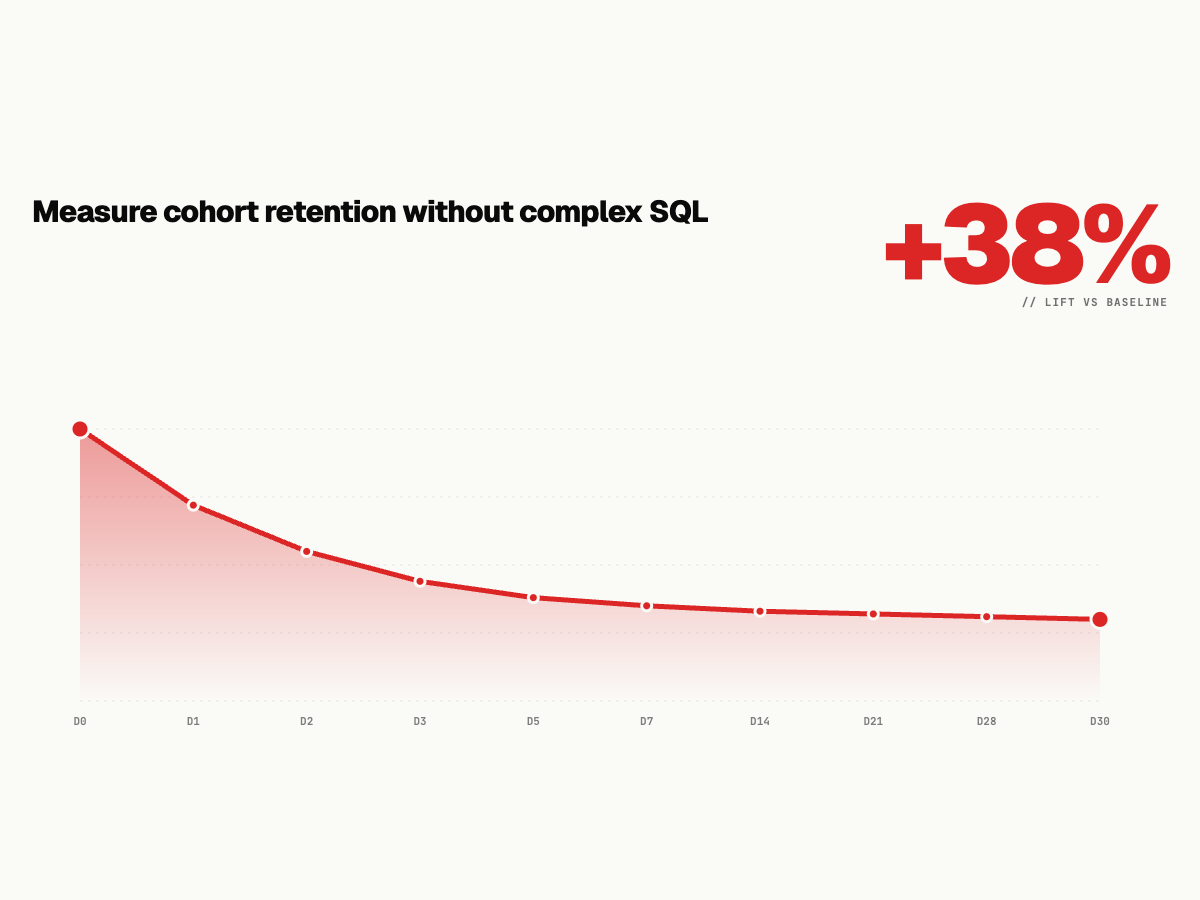

Pilot question pack buyers should require

A robust pilot should include a balanced question pack rather than vendor-selected examples. Include at least one retrieval question, one segmentation question, one funnel question, one retention question, one anomaly investigation prompt, and one intentionally ambiguous query. This reveals not only answer quality but also system behavior under uncertainty. A tool that handles ambiguity honestly is often more valuable than a tool that answers everything confidently.
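Before sending a pack to vendors, it helps to confirm that every question type listed above is represented. A minimal sketch, assuming each question is stored as a `(question_type, question_text)` pair; the type labels and sample questions are illustrative.

```python
# The six question types named in the paragraph above.
REQUIRED_TYPES = {
    "retrieval",
    "segmentation",
    "funnel",
    "retention",
    "anomaly",
    "ambiguous",
}


def missing_question_types(pack):
    """Return required question types that the pack does not cover yet."""
    present = {question_type for question_type, _ in pack}
    return sorted(REQUIRED_TYPES - present)


# Hypothetical draft pack that still lacks an intentionally ambiguous query.
draft_pack = [
    ("retrieval", "How many users signed up last week?"),
    ("segmentation", "Signups last week by acquisition channel"),
    ("funnel", "Conversion from signup to first project, last 30 days"),
    ("retention", "Week-4 retention for the March signup cohort"),
    ("anomaly", "Why did activation drop on Tuesday?"),
]

print(missing_question_types(draft_pack))
```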

Require each vendor to show generated SQL, explain semantic interpretation, and document confidence cues for every question in the pack. Then ask analysts to score correction effort. Correction effort is a powerful comparator because it measures operational burden directly. Two tools may look equally accurate in demos, but one may require much less analyst cleanup in production workflows.
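Correction effort is easy to compare once analysts record it per question. The sketch below assumes effort is logged as minutes of analyst cleanup per question type, with zero meaning the answer was usable as generated. The vendor names and numbers are made up for illustration.

```python
from statistics import mean


def correction_summary(minutes_by_question):
    """Summarize analyst cleanup effort for one vendor's question pack."""
    values = [float(v) for v in minutes_by_question.values()]
    return {
        "mean_minutes": mean(values),
        "untouched_share": sum(1 for v in values if v == 0) / len(values),
    }


# Hypothetical analyst scores: minutes of cleanup per question type.
vendor_x = {"retrieval": 0, "segmentation": 5, "funnel": 10,
            "retention": 0, "anomaly": 15, "ambiguous": 30}
vendor_y = {"retrieval": 0, "segmentation": 0, "funnel": 5,
            "retention": 0, "anomaly": 10, "ambiguous": 15}

summary_x = correction_summary(vendor_x)
summary_y = correction_summary(vendor_y)

# Lower mean cleanup time suggests less operational burden in production.
lower_burden = "Vendor X" if summary_x["mean_minutes"] < summary_y["mean_minutes"] else "Vendor Y"
print(summary_x, summary_y, lower_burden)
```

Reporting the share of untouched answers alongside the mean keeps one very expensive correction from hiding an otherwise clean pack, or the reverse.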

Procurement rubric for cross-functional alignment

- Data team score: semantic integrity, review workflow, and auditability.

- Product team score: question speed, iteration quality, and decision usability.

- Growth team score: segmentation flexibility and campaign-readout reliability.

- Leadership score: rollout risk, governance visibility, and expected adoption curve.

FAQ

What is natural language to SQL analytics?

It is a workflow where users ask questions in plain language and the system translates intent into SQL against governed datasets and definitions.

What is the most important checklist criterion?

For most teams, SQL transparency is the most important criterion because it enables verification, reproducibility, and accountable decision-making.

How many use cases should we include in a pilot?

Start with one KPI domain and constrained question types. Expand only after acceptance and correction metrics confirm sustained answer quality.

Why Mitzu is a strong fit

Mitzu is a strong fit for natural language to SQL analytics buyers who care about trust as much as speed. It combines transparent query generation, governed semantics, and role-aware controls so teams can scale self-serve without losing confidence.

If you are running a side-by-side evaluation, include correction effort and approval burden in your scorecard. You can request a Mitzu checklist-based pilot review to benchmark your use cases.